After AI Agents: What Actually Comes Next for Users

Everyone is building AI agents. OpenAI, Anthropic, Google — the race is on to ship tools that don’t just answer questions but take real actions across your workflows. Book flights, manage your inbox, deploy code, close deals. The pitch is seductive: stop prompting, start delegating.

But here’s the question nobody in the product demos wants to touch: what happens when agents become normal? When “AI that does things for you” is just the baseline, what’s the next thing users actually want?

A recent discussion on r/ArtificialIntelligence posed exactly this question. The thread drew more than 60 comments, and it revealed something telling: the conversation wasn’t about more automation. It was about better control.

Why Agents Feel Like a Transitional Phase

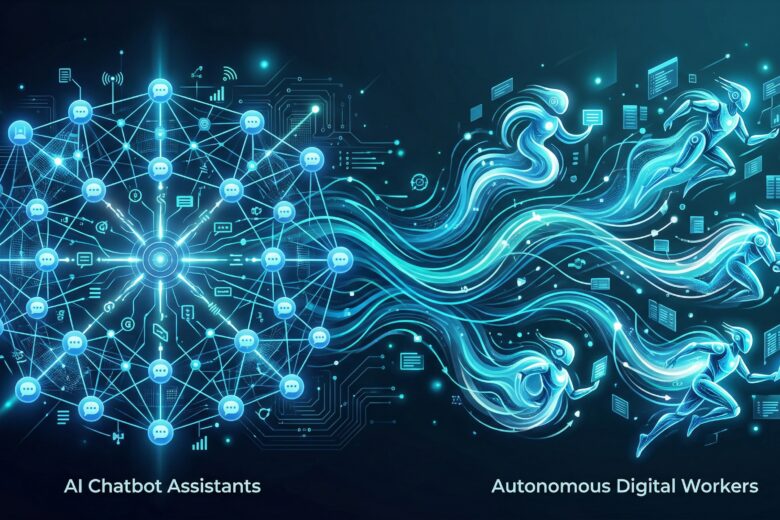

Agents are compelling because they solve a real frustration: chatbots that talk a lot but can’t do anything. Moving from “answer my question” to “handle this task” is a genuine leap.

But spend time using agent-based products and the cracks show fast. They misunderstand intent. They get stuck in loops. They make decisions you wouldn’t make, and they don’t always tell you they did. The excitement around agents is real, but it’s also partially relief that we’re finally moving past the chatbot era.

Gartner projects that 40% of enterprise applications will embed task-specific AI agents by the end of 2026 — up from under 5% in 2025. That’s explosive adoption. But scale reveals problems that demos hide.

The Control Problem Nobody’s Solving Yet

The most upvoted ideas in the Reddit thread weren’t about autonomy — they were about guardrails. Users want configurable risk tolerance. They want to say “handle my emails, but ask before replying to my boss” and have that actually work. They want agents that know when to stop.

This aligns with what’s happening in enterprise. As Raconteur reported in March 2026, governance tooling for autonomous agents is now reaching the market — audit trails, rollback mechanisms, accountability frameworks. These aren’t sexy features, but they’re what separates a demo from a product you’d trust with real work.
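Audit trails and rollback are easier to picture in code. The sketch below is a minimal, hypothetical illustration. The `AuditLog` class and its methods are invented for this example and are not taken from any governance product. It records every action the agent takes with a stated reason and an undo callback. The history is append-only, so a rollback reverses recent actions but leaves the record of what happened intact.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable, List


@dataclass
class AuditEntry:
    timestamp: str
    action: str
    reason: str                 # why the agent says it took the action
    undo: Callable[[], None]    # how to reverse it (assumed to exist for every action)
    undone: bool = False


@dataclass
class AuditLog:
    # Append-only history: rollback marks entries as undone instead of deleting them.
    entries: List[AuditEntry] = field(default_factory=list)

    def record(self, action: str, reason: str, undo: Callable[[], None]) -> None:
        ts = datetime.now(timezone.utc).isoformat()
        self.entries.append(AuditEntry(ts, action, reason, undo))

    def rollback(self, n: int = 1) -> List[str]:
        """Undo the n most recent actions that are still in effect, newest first."""
        undone = []
        for entry in reversed(self.entries):
            if len(undone) == n:
                break
            if not entry.undone:
                entry.undo()
                entry.undone = True
                undone.append(entry.action)
        return undone

    def report(self) -> List[str]:
        """Human-readable trail of what the agent did and why."""
        return [
            f"{e.timestamp} {e.action} ({e.reason}){' [undone]' if e.undone else ''}"
            for e in self.entries
        ]


# Usage: a toy calendar that the agent edits.
calendar: List[str] = []
log = AuditLog()


def add_event(title: str, reason: str) -> None:
    calendar.append(title)
    log.record(f"add_event:{title}", reason, undo=lambda: calendar.remove(title))


add_event("Standup", "user asked for a daily sync")
add_event("Vendor call", "inferred from an email thread")
print(log.rollback(1))  # ['add_event:Vendor call']
print(calendar)         # ['Standup']
for line in log.report():
    print(line)
```

Requiring every action to carry its own undo is the expensive part in practice. Some actions, such as a sent email, can’t truly be reversed, and that is one reason to gate them behind confirmation instead.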

The pattern is clear: the next shift isn’t more autonomous agents. It’s controllable agents.

What “After Agents” Actually Looks Like

Based on the signal from users, builders, and the enterprise market, here are the five directions that matter:

1. Risk-Aware Agents with User-Defined Boundaries

Think of this as a permissions model for AI. Not a simple on/off toggle, but granular controls: “handle scheduling autonomously, but confirm any purchase over $50.” The technical challenge isn’t small — it requires agents that can assess risk in context — but the demand is unmistakable.
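One way to picture such a permissions model is a small, deny-by-default policy that is checked before every action. The Python sketch below is purely illustrative. The `Policy`, `Action`, and `Decision` names, the action kinds, and the boss’s address are all hypothetical. It encodes two example rules from this article: “confirm any purchase over $50” and “ask before replying to my boss.” The hard part the paragraph mentions, assessing risk in context, is reduced here to fixed rules, and that simplification is exactly the gap real systems still need to close.

```python
from dataclasses import dataclass
from enum import Enum
from typing import FrozenSet


class Decision(Enum):
    ALLOW = "allow"      # agent acts on its own
    CONFIRM = "confirm"  # agent must ask the user first
    DENY = "deny"        # agent may not do this at all


@dataclass(frozen=True)
class Action:
    kind: str             # e.g. "schedule", "purchase", "reply_email"
    amount: float = 0.0   # dollar value, if the action spends money
    recipient: str = ""   # who a message goes to, if any


@dataclass(frozen=True)
class Policy:
    allowed_kinds: FrozenSet[str]        # everything else is denied by default
    confirm_purchases_over: float        # the "$50" rule
    confirm_recipients: FrozenSet[str]   # e.g. "ask before replying to my boss"

    def decide(self, action: Action) -> Decision:
        if action.kind not in self.allowed_kinds:
            return Decision.DENY
        if action.kind == "purchase" and action.amount > self.confirm_purchases_over:
            return Decision.CONFIRM
        if action.recipient in self.confirm_recipients:
            return Decision.CONFIRM
        return Decision.ALLOW


policy = Policy(
    allowed_kinds=frozenset({"schedule", "purchase", "reply_email"}),
    confirm_purchases_over=50.0,
    confirm_recipients=frozenset({"boss@example.com"}),
)

print(policy.decide(Action("schedule")))                                   # Decision.ALLOW
print(policy.decide(Action("purchase", amount=120.0)))                     # Decision.CONFIRM
print(policy.decide(Action("reply_email", recipient="boss@example.com")))  # Decision.CONFIRM
print(policy.decide(Action("delete_account")))                             # Decision.DENY
```

The three-way outcome is the point of the design. A plain on/off toggle collapses `CONFIRM` into either `ALLOW` or `DENY`, and that is the granularity users in the thread were asking for.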

2. Persistent AI Coworkers, Not One-Shot Assistants

Current agents run a task and disappear. Users want continuity: an AI that remembers your preferences, learns from corrections, and builds working context over weeks and months. The shift is from transactional to relational.

3. Multi-Agent Coordination That Doesn’t Require a PhD

Orchestrating multiple specialized agents is powerful but currently requires technical skill. The next wave makes it accessible: drag-and-drop workflows where a research agent feeds a writing agent, which hands off to a review agent, all with human checkpoints built in.
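Under the drag-and-drop surface, a workflow like this is essentially a sequence of stages with a human gate between them. The sketch below shows that shape only. The three agents are hypothetical stand-in functions rather than calls to any real model or framework, and the human checkpoint is simulated with a lambda.

```python
from typing import Callable, List, Optional, Tuple

# Each "agent" is stood in for by a plain function from text to text.
Agent = Callable[[str], str]
# A checkpoint sees the stage name and its output; returning False halts the run.
Checkpoint = Callable[[str, str], bool]


def research_agent(topic: str) -> str:
    return f"notes on {topic}"


def writing_agent(notes: str) -> str:
    return f"draft based on {notes}"


def review_agent(draft: str) -> str:
    return f"reviewed: {draft}"


def run_pipeline(task: str, stages: List[Tuple[str, Agent]], approve: Checkpoint) -> Optional[str]:
    """Hand each stage's output to the next, pausing for human approval in between."""
    output = task
    for name, agent in stages:
        output = agent(output)
        if not approve(name, output):
            print(f"Stopped after '{name}': output not approved")
            return None
    return output


stages = [("research", research_agent), ("write", writing_agent), ("review", review_agent)]

# In a real tool the checkpoint would be a UI prompt; here approval is simulated.
print(run_pipeline("agent governance", stages, lambda stage, out: True))
# reviewed: draft based on notes on agent governance
print(run_pipeline("agent governance", stages, lambda stage, out: stage != "write"))
# Stopped after 'write': output not approved
# None
```

To make the pause real in a terminal, the checkpoint could be something like `lambda stage, out: input(f"{stage}: {out}\nContinue? [y/N] ").strip().lower() == "y"`. A visual builder would simply render the same gate as a button.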

4. Reasoning Models as the Infrastructure Layer

The real breakthrough underneath the agent trend is reasoning. Models like OpenAI’s o1 and its successors don’t just pattern-match; they work through problems step by step. As ByteByteGo documented in their 2026 trends analysis, reasoning capabilities powered by Reinforcement Learning with Verifiable Rewards (RLVR) are becoming standard across major AI labs. That capability is what lets agents actually plan instead of merely reacting.

5. Human-in-the-Loop as a Feature, Not a Bug

The most sophisticated agent systems in 2026 aren’t the most autonomous ones. They’re the ones that know exactly when to ask for human input. This isn’t a limitation — it’s a design principle. Finance, healthcare, and legal sectors are adopting agents specifically because the new governance tools let them define exactly where human oversight kicks in.

The Uncomfortable Truth About Agent Hype

There’s a gap between what agent marketing promises and what users experience. The Reddit thread is full of people who’ve tried agent-based products and found them simultaneously impressive and frustrating. They can do surprising things. They also fail in surprising ways.

This isn’t a reason to write off agents — it’s a reason to be honest about where we are. We’re in the early-adopter phase where the technology is real but the reliability isn’t. The companies that win won’t be the ones promising full autonomy. They’ll be the ones that make partial autonomy feel safe and predictable.

What Users Should Actually Do Right Now

If you’re evaluating AI agents — for personal productivity or business workflows — here’s a practical framework:

– Start with bounded tasks. Don’t give an agent your entire email workflow. Give it meeting scheduling. See how it handles edge cases.

– Test the failure modes, not just the happy path. Every agent demo looks great. Try breaking it. See what happens when instructions are ambiguous.

– Prioritize observability. If you can’t see what the agent did and why, you can’t trust it. Look for tools with clear audit trails.

– Define your risk threshold explicitly. Write down what you’re okay with the agent deciding on its own, and what requires your sign-off. Use that as your evaluation criteria.

– Don’t wait for the “perfect” agent. The technology is moving fast, and many of today’s rough edges are likely to be smoothed out, so build familiarity now. Just don’t bet critical workflows on it yet.
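Writing your risk threshold down also makes it testable. The sketch below is a rough illustration only. The `TrialStep` format and the example rules are hypothetical, and real agent products expose their logs in their own formats. The idea is to replay what an agent did during a trial run and check it against your rules in both directions: actions it should have asked about but didn’t, and interruptions it didn’t need to make.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class TrialStep:
    """One action observed while trialing an agent (hypothetical log format)."""
    description: str
    category: str       # e.g. "scheduling", "purchase", "email"
    amount: float       # dollar value, 0 if not applicable
    asked_first: bool   # did the agent ask for sign-off before acting?


def needs_signoff(step: TrialStep) -> bool:
    """Your written-down threshold, expressed as rules (example rules only)."""
    if step.category == "purchase" and step.amount > 50:
        return True
    if step.category == "email" and "boss" in step.description.lower():
        return True
    return False


def overreach(steps: List[TrialStep]) -> List[str]:
    """Steps where the agent acted alone on something that needed sign-off."""
    return [s.description for s in steps if needs_signoff(s) and not s.asked_first]


def over_asking(steps: List[TrialStep]) -> List[str]:
    """Steps where the agent interrupted you for something it could have handled."""
    return [s.description for s in steps if s.asked_first and not needs_signoff(s)]


trial = [
    TrialStep("Booked a 1:1 for Tuesday", "scheduling", 0, False),
    TrialStep("Bought a $120 conference ticket", "purchase", 120, False),
    TrialStep("Replied to boss about the deadline", "email", 0, True),
    TrialStep("Moved a recurring meeting", "scheduling", 0, True),
]

print(overreach(trial))    # ['Bought a $120 conference ticket']
print(over_asking(trial))  # ['Moved a recurring meeting']
```

Overreach is the dangerous failure, but over-asking matters too. An agent that confirms everything is safe yet barely saves you any time.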

The Bottom Line

AI agents are a real and important shift. But they’re a means, not an end. The next wave isn’t about agents that do more — it’s about agents that do exactly what you need, no more and no less, with the transparency to prove it.

That’s less exciting than “AI runs your entire business.” It’s also more useful.

—

References:

- Reddit discussion: [If “AI agents” are the current trend, what’s the next shift from a user perspective?](https://www.reddit.com/r/ArtificialInteligence/comments/1s9q7xj/if_ai_agents_are_the_current_trend_whats_the_next/) — r/ArtificialIntelligence, April 2026

- Raconteur: [Autonomous AI agents 2026: the new rules for business governance](https://www.raconteur.net/technology/autonomous-ai-agents-2026-the-new-rules-for-business-governance) — March 30, 2026

- ByteByteGo: [What’s Next in AI: Five Trends to Watch in 2026](https://blog.bytebytego.com/p/whats-next-in-ai-five-trends-to-watch) — March 2026