TL;DR — Key Takeaways: Autonomous AI agents now handle multi-step DevOps, support, and research tasks with minimal human oversight. AI coding assistants evolved into agentic partners that plan features, open PRs, and review diffs across entire repositories. Multimodal models processing text, images, audio, and video are mainstream production APIs in …

How Machine Learning Changes Cybersecurity Threat Detection

ML Reshapes Threat Detection: Machine learning is reshaping cybersecurity threat detection by analyzing massive volumes of network traffic and endpoint data in real time, identifying anomalies and zero-day exploits that traditional signature-based tools consistently miss. ML models learn baseline behavior patterns and flag deviations automatically, cutting mean time-to-detect from days …
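As a rough illustration of "learn a baseline, flag deviations", the sketch below uses a plain z-score test. This is a deliberately simple stand-in, not the method the article describes; the function name, the threshold of 3 standard deviations, and the sample traffic numbers are all hypothetical.

```python
from statistics import mean, stdev


def flag_deviations(baseline, observed, threshold=3.0):
    """Return observed values whose z-score against the baseline exceeds threshold."""
    mu = mean(baseline)
    sigma = stdev(baseline)  # sample standard deviation of the baseline window
    if sigma == 0:
        # A perfectly flat baseline: anything different from it is a deviation.
        return [x for x in observed if x != mu]
    return [x for x in observed if abs(x - mu) / sigma > threshold]


# Hypothetical requests-per-minute samples for one endpoint.
baseline_requests_per_min = [98, 102, 101, 97, 100, 103, 99, 100]
new_requests_per_min = [101, 99, 480, 102]

anomalies = flag_deviations(baseline_requests_per_min, new_requests_per_min)
print(anomalies)  # [480]
```

Production detectors typically model many features at once and update the baseline continuously; the single-metric version here only shows the shape of the idea.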

Serverless GPU Cold Starts Take 40s – Here’s How to Fix

The 1000x Latency Gap: A cold-start instance on a serverless GPU platform produces its first token after more than 40 seconds. A warm instance generates subsequent tokens in roughly 30 milliseconds. That is a latency ratio of over 1,300:1 between the cold and warm states, and it is the single …
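The ratio quoted above follows directly from the two latencies. A minimal check, treating 40 seconds as a lower bound for the cold start (the variable names are illustrative):

```python
# Figures from the text: cold start takes "more than 40 seconds",
# warm token generation takes roughly 30 milliseconds.
cold_first_token_s = 40.0
warm_token_s = 0.030

latency_ratio = cold_first_token_s / warm_token_s
print(f"{latency_ratio:,.0f}:1")  # 1,333:1
```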

Anthropic Launches Fable 5: Public Mythos-Class Model

Anthropic launched Claude Fable 5 and Claude Mythos 5 today — a Mythos-class model that tops nearly every benchmark. Fable 5 is available to the public via API and Amazon Bedrock at $10/M input and $50/M output tokens, less than half the price of Mythos Preview. Mythos 5, the unrestricted …

Cloud Egress Fees Now Surpass GPU Compute Costs for AI

Egress Fees Outpace GPU Cost: AWS charges $0.09 per GB for data transfer out to the internet. A single RAG pipeline processing 10,000 queries daily with 50 KB embedding payloads per request generates roughly 15 TB of egress per month, which is $1,350 before you factor in vector DB …

Google I/O 2026: How AI Agents Replaced the Search Box

Google replaced its 25-year-old search box with an AI-powered interface at I/O 2026. The new “intelligent search box” accepts text, images, files, video, and Chrome tabs, powered by Gemini 3.5 Flash. Instead of blue links, users get interactive AI-generated experiences, custom visualizations, and “information agents” that monitor the web around …

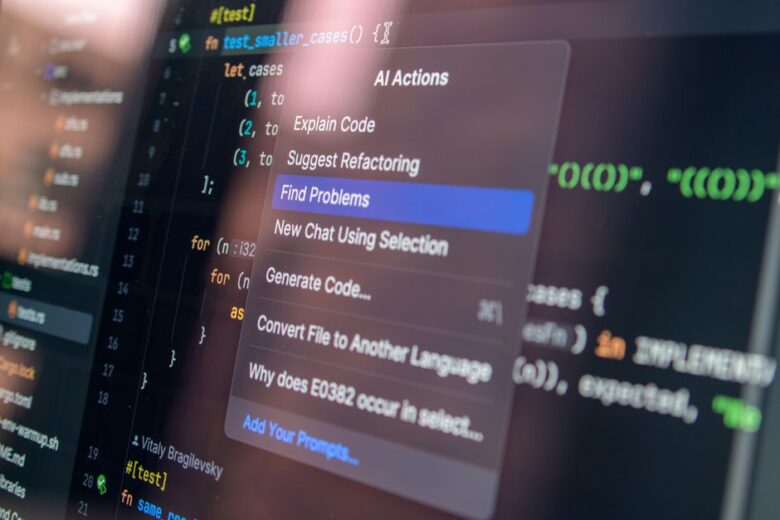

Building Claude Skills: A Complete Developer Guide

What Are Claude Skills? Direct answer: Claude Skills are folders of markdown instructions, scripts, and reference files that Claude loads dynamically to handle specialized tasks. They eliminate the need to re-paste context, style guides, or workflow rules into every conversation session. Every time you start a new Claude conversation, you …

LLM Gateways Cut 72% of Wasted API Spend in Production

Wasted LLM Spend: The Gateway Fix. Enterprise LLM API spend crossed $8.4 billion in 2025, and the majority of teams hardcode a single frontier model for every request, including the 80% that could run on a model costing one-tenth the price. LLM gateways fix this systematically. A workload of …
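One way the 72% in the headline could follow from the figures above is sketched here. It assumes every request costs the same on the frontier model and that routing itself is free; neither assumption is stated in the excerpt, and the function name is hypothetical.

```python
def routed_savings(cheap_share: float, cheap_cost_ratio: float) -> float:
    """Fraction of spend saved when `cheap_share` of requests move to a model
    that costs `cheap_cost_ratio` times the frontier price.

    Assumes uniform per-request cost on the frontier model (an assumption,
    not something the source states).
    """
    new_cost = (1 - cheap_share) * 1.0 + cheap_share * cheap_cost_ratio
    return 1 - new_cost


# 80% of requests routed to a model at one-tenth the price.
savings = routed_savings(cheap_share=0.80, cheap_cost_ratio=0.10)
print(f"{savings:.0%}")  # 72%
```

Real workloads have uneven token counts per request, so actual savings depend on which requests get routed, not only on how many.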

Function Calling Accuracy Plummets in Production Workflows

Benchmarks Claim 95%. Production Disagrees. The Berkeley Function Calling Leaderboard (BFCL V4) reports that GPT-4o achieves over 90% accuracy on single-function tool calls. Add a second tool to the context, and accuracy drops by double digits. Add five, and you’re in a different regime entirely. The gap between benchmark function …

Agent Memory Is Just a Vector DB. That’s the Problem.

The Benchmark Numbers: Full-context injection into an LLM prompt scores 72.9% accuracy on the LoCoMo benchmark at 17.12 seconds p95 latency. Flat vector retrieval drops to 66.9% accuracy but cuts latency to 1.44 seconds. That is a 6-point accuracy gap buying a 91% speed gain and a 90% reduction …
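The accuracy and latency trade-off can be recomputed from the four numbers quoted above. The dictionary layout and variable names below are illustrative only.

```python
# LoCoMo figures as quoted in the text.
full_context = {"accuracy": 72.9, "p95_s": 17.12}
flat_vector = {"accuracy": 66.9, "p95_s": 1.44}

# Accuracy given up, in percentage points.
accuracy_gap_points = full_context["accuracy"] - flat_vector["accuracy"]

# Share of p95 latency removed, and the equivalent speedup factor.
latency_reduction = 1 - flat_vector["p95_s"] / full_context["p95_s"]
speedup = full_context["p95_s"] / flat_vector["p95_s"]

print(f"accuracy gap: {accuracy_gap_points:.1f} points")
print(f"latency reduction: {latency_reduction:.1%}")
print(f"speedup: {speedup:.1f}x")
```

The results land close to the 6-point gap and roughly 91% latency cut cited in the excerpt, which corresponds to retrieval being nearly 12 times faster at p95.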

Multi-Agent Reliability: 85% Per Step, 20% at Step 10

The Compound Failure Equation: Here is the math that most teams deploying multi-agent AI systems have never computed. If each agent step succeeds 85% of the time, a rate most vendors would call impressive, a 10-step workflow completes successfully just 19.7% of the time. That is 0.85^10 = …
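The compounding above can be reproduced in a few lines. The sketch assumes each step succeeds or fails independently and that a single failure sinks the whole workflow; the function name is illustrative.

```python
def workflow_success_rate(per_step_success: float, steps: int) -> float:
    """End-to-end success probability when every step must succeed.

    Assumes independent steps with identical success rates.
    """
    return per_step_success ** steps


rate = workflow_success_rate(0.85, 10)
print(f"{rate:.1%}")  # 19.7%

# Inverting the formula: per-step reliability needed for 90% end-to-end success.
required = 0.90 ** (1 / 10)
print(f"{required:.2%}")
```

Under the same independence assumption, the inversion shows that a 10-step workflow needs close to 99% per-step reliability to finish successfully nine times out of ten.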

Inside the DevOps Community in Brazil: Events, Careers, and Skills

Brazil hosts one of Latin America’s most active DevOps communities. This guide breaks down where practitioners gather, the core technical skills the market demands, and how cloud providers, certifications, and AI tools are shaping career paths for Brazilian engineers.