AI Agents Won’t Scale in the Enterprise Until They Learn to Ask Permission

There is a strange gap at the center of the agent boom. Models are getting better at reasoning, tool use is improving fast, and every vendor now has a story about autonomous workflows. But the hardest production problem is not whether an agent can decide what to do next. It is whether the business can trust what happens when that decision turns into a real-world action.

That is why one recent Reddit discussion about a “deterministic authorization layer” for agents felt more important than another benchmark post or model release. It pointed at the part of agentic AI that many teams are still treating as plumbing, when it is quickly becoming product strategy.

The new bottleneck is not intelligence. It is side effects.

In the Reddit thread, the builder behind a project called OxDeAI argues that many real failures happen after the model has already done the clever part. The trouble starts when the agent touches live systems: APIs, payment rails, infrastructure, or any workflow with costs and consequences attached.

The list is painfully familiar for anyone who has shipped automation:

- runaway API usage

- repeated side effects from retries

- recursive tool loops

- unbounded concurrency

- overspending on usage-based services

- actions that are technically valid but operationally unacceptable

That framing matters because it shifts the discussion away from “How autonomous can we make the model?” toward a more useful question: “What are we willing to let the system do without a second layer of control?”

An enterprise can tolerate a chatbot that gives a mediocre answer. It has a much lower tolerance for an agent that opens duplicate tickets, exhausts a paid API quota, or pushes the wrong change into a production workflow three hundred times before anyone notices.

Why the next winning products will feel more restrictive, not more magical

The market still rewards demos that look frictionless. Ask the model. Watch it plan. See it call tools. Get the result. It is compelling because it hides the mess.

But production software rarely wins by hiding every constraint. It wins by turning risk into something legible.

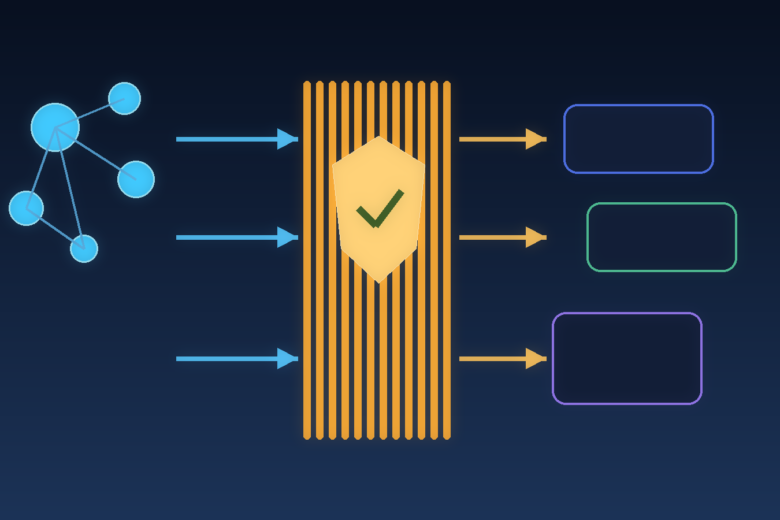

The Reddit post proposes a clean pattern: the agent emits a structured intent, a policy engine evaluates it against a deterministic snapshot, and only approved actions receive signed authorization to execute. That idea is less glamorous than “fully autonomous AI coworkers,” but it maps much better to how serious software systems earn trust.
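The three-step pattern (intent, deterministic policy check, signed authorization) fits in a few dozen lines. The Python sketch below is a minimal illustration, not OxDeAI's implementation. The `Intent` fields, the snapshot keys, and every function name are hypothetical. HMAC-SHA256 with a shared key stands in for whatever signing scheme a real system would use.

```python
import hashlib
import hmac
import json
from dataclasses import asdict, dataclass
from typing import Optional

# Hypothetical shared key. A real deployment would load it from a secrets manager,
# or use asymmetric signatures so the executor can verify but never mint tokens.
SIGNING_KEY = b"demo-key-do-not-use"


@dataclass(frozen=True)
class Intent:
    action: str        # e.g. "refund"
    amount_cents: int
    target: str        # e.g. a customer ID


def canonical(obj: dict) -> bytes:
    # Sorted keys and fixed separators give identical bytes for identical content,
    # so hashes and signatures are reproducible.
    return json.dumps(obj, sort_keys=True, separators=(",", ":")).encode()


def evaluate(intent: Intent, snapshot: dict) -> bool:
    # Pure function of (intent, snapshot): no clock, network, or model calls,
    # so the same inputs always yield the same decision.
    if intent.action not in snapshot["allowed_actions"]:
        return False
    return snapshot["spent_cents"] + intent.amount_cents <= snapshot["budget_cents"]


def authorize(intent: Intent, snapshot: dict) -> Optional[dict]:
    """Return a signed authorization token, or None if the policy denies the intent."""
    if not evaluate(intent, snapshot):
        return None
    payload = {
        "intent": asdict(intent),
        "snapshot_sha256": hashlib.sha256(canonical(snapshot)).hexdigest(),
    }
    signature = hmac.new(SIGNING_KEY, canonical(payload), hashlib.sha256).hexdigest()
    return {"payload": payload, "signature": signature}


def verify(token: dict) -> bool:
    # The executor recomputes the MAC and refuses any token that does not match.
    expected = hmac.new(SIGNING_KEY, canonical(token["payload"]), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, token["signature"])
```

In use, the agent runtime calls `authorize` with the intent and a snapshot captured at decision time. The component that actually touches the live API calls `verify` and executes only the exact fields in the signed payload. If anyone alters the token after signing, for example by raising the amount, `verify` returns `False`.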

The same dynamic is visible in guidance from major platform vendors. Anthropic’s advice on building effective agents repeatedly pushes teams toward simpler, more composable systems and warns that extra abstraction can make systems harder to debug. Microsoft’s enterprise guidance is even blunter: without explicit boundaries, governance, and deterministic workflows for critical logic, organizations end up with uncontrolled agent sprawl and operational risk.

That combination is the real signal. The industry’s practical operators are moving in the same direction: less romanticism about autonomy, more engineering around boundaries.

The authorization layer is becoming the new middleware

In the first cloud era, identity, access control, observability, and billing guardrails became mandatory layers because software stopped living in one place. In the agent era, something similar is happening for actions.

An authorization layer for agents does four jobs at once:

1. It translates fuzzy model output into enforceable intent

A model may say, in effect, “I should refund this customer, notify finance, and pause the subscription.” A production system cannot execute “in effect.” It needs a structured intent with fields, limits, and context. That translation step is where governance starts.
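The Python sketch below shows a minimal version of that translation step. The intent classes, the action registry, and the refund ceiling are hypothetical. The point is that anything the model emits outside a known shape gets rejected before it can reach a live system.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class RefundIntent:
    customer_id: str
    amount_cents: int
    reason: str


@dataclass(frozen=True)
class PauseSubscriptionIntent:
    subscription_id: str


# Hypothetical registry: only these action names can ever become executable intents.
INTENT_TYPES = {"refund": RefundIntent, "pause_subscription": PauseSubscriptionIntent}
MAX_REFUND_CENTS = 50_000  # hypothetical per-intent ceiling


class IntentError(ValueError):
    """Raised when model output cannot be turned into a valid, bounded intent."""


def parse_intent(raw: str):
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise IntentError(f"not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise IntentError("intent must be a JSON object")
    fields = dict(data)
    action = fields.pop("action", None)
    cls = INTENT_TYPES.get(action) if isinstance(action, str) else None
    if cls is None:
        raise IntentError(f"unknown action: {action!r}")
    try:
        intent = cls(**fields)  # missing or unexpected fields raise TypeError
    except TypeError as exc:
        raise IntentError(str(exc)) from exc
    if isinstance(intent, RefundIntent):
        # Dataclasses do not check types, so validate the limit-bearing field explicitly.
        if type(intent.amount_cents) is not int or not 0 < intent.amount_cents <= MAX_REFUND_CENTS:
            raise IntentError("refund amount missing or out of range")
    return intent
```

Each action in the example becomes its own intent and gets validated on its own. A notify-finance action would need an intent class of its own in the same registry.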

2. It separates decision quality from execution safety

This is the big architectural shift. The model can still be useful even when it is imperfect, as long as execution is bounded. That means teams no longer need to solve all reliability problems inside the prompt. They can place hard limits around budget, concurrency, destination, timing, and replay.
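Bounding execution can be as simple as a guard that the executor consults before every side effect. The sketch below is hypothetical and covers three of the limits named above:

- destination, through an allowlist
- timing, through a UTC hour window
- replay, through an idempotency key

Budget and concurrency follow the same pattern. The caller passes the hour in instead of the guard reading the clock, which keeps the check deterministic and testable. In a real system the set of seen keys would live in a durable store.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ExecutionGuard:
    """Hypothetical hard limits that apply no matter what the model decided."""

    allowed_hosts: frozenset      # destination allowlist
    window_start_hour: int        # inclusive, UTC
    window_end_hour: int          # exclusive, UTC
    seen_keys: set = field(default_factory=set)  # in memory here; durable in practice

    def check(self, host: str, idempotency_key: str, hour_utc: int) -> Optional[str]:
        """Return a denial reason, or None if the call may proceed."""
        if host not in self.allowed_hosts:
            return "destination not allowed"
        if not (self.window_start_hour <= hour_utc < self.window_end_hour):
            return "outside execution window"
        if idempotency_key in self.seen_keys:
            return "replay: key already executed"
        # Recording the key before execution gives at-most-once semantics:
        # a failed call must be retried under a new key or after an explicit reset.
        self.seen_keys.add(idempotency_key)
        return None
```

This design prefers at-most-once over at-least-once. When a retry repeats an agent action, the action may duplicate a refund or a ticket, and that duplicate usually costs more than a missed call.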

3. It creates auditable accountability

If an agent takes a consequential action, someone will eventually ask why it was allowed. “The model thought it was appropriate” is not an answer. A policy decision, backed by a deterministic snapshot and signed authorization, is much closer to something compliance, finance, and operations teams can live with.
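The following sketch makes that accountability concrete under stated assumptions. The system writes down every decision, allowed or denied, together with a hash of the snapshot it used and the policy version that made it. A reviewer can later re-run the same policy on the archived snapshot and get the same answer. The field names, the version label, and the in-memory list are hypothetical. A production log would use append-only storage.

```python
import hashlib
import json
from dataclasses import dataclass

POLICY_VERSION = "demo-policy-v1"  # hypothetical label stored with every decision


def canonical(obj) -> bytes:
    # Stable serialization so the same snapshot always hashes the same way.
    return json.dumps(obj, sort_keys=True, separators=(",", ":")).encode()


def policy(intent: dict, snapshot: dict) -> tuple[bool, str]:
    # Deterministic: identical inputs always give the same (allowed, reason) pair.
    if intent["amount_cents"] > snapshot["per_action_limit_cents"]:
        return False, "exceeds per-action limit"
    if snapshot["spent_cents"] + intent["amount_cents"] > snapshot["budget_cents"]:
        return False, "exceeds remaining budget"
    return True, "within limits"


@dataclass(frozen=True)
class AuditEntry:
    intent: dict
    snapshot_sha256: str
    policy_version: str
    allowed: bool
    reason: str


def decide_and_record(intent: dict, snapshot: dict, log: list) -> AuditEntry:
    """Evaluate the policy and append the decision, allowed or not, to the log."""
    allowed, reason = policy(intent, snapshot)
    digest = hashlib.sha256(canonical(snapshot)).hexdigest()
    entry = AuditEntry(dict(intent), digest, POLICY_VERSION, allowed, reason)
    log.append(entry)
    return entry


def replay(entry: AuditEntry, snapshot: dict) -> bool:
    """Check that an archived snapshot reproduces the recorded decision."""
    if entry.policy_version != POLICY_VERSION:
        return False  # replay needs the policy version that made the decision
    if hashlib.sha256(canonical(snapshot)).hexdigest() != entry.snapshot_sha256:
        return False  # wrong or altered snapshot
    return policy(entry.intent, snapshot) == (entry.allowed, entry.reason)
```

With this log, a reviewer can answer "why was this allowed?" The entry records the reason, and `replay` shows that nobody reconstructed that reason after the fact.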

4. It turns autonomy into a tunable product feature

This may be the most commercially important point. Once execution rights are explicit, companies can decide which customers get read-only agents, which get approval-based actions, which get capped budgets, and which can unlock higher autonomy. The guardrail itself becomes part of the product packaging.
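Once execution rights are explicit, a data table can express the packaging instead of a prompt. The sketch below models the four tiers described above: read-only, approval-based, capped budget, and higher autonomy. The tier names, limits, and the `mode_for` function are illustrative assumptions.

```python
from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    DENY = "deny"
    REQUIRE_APPROVAL = "require_approval"
    ALLOW = "allow"


@dataclass(frozen=True)
class Tier:
    can_write: bool
    needs_approval: bool
    daily_budget_cents: int


# Hypothetical packaging; tier names and limits are illustrative only.
TIERS = {
    "read_only": Tier(can_write=False, needs_approval=False, daily_budget_cents=0),
    "approval": Tier(can_write=True, needs_approval=True, daily_budget_cents=50_000),
    "capped": Tier(can_write=True, needs_approval=False, daily_budget_cents=20_000),
    "autonomous": Tier(can_write=True, needs_approval=False, daily_budget_cents=500_000),
}


def mode_for(tier_name: str, is_write: bool, cost_cents: int, spent_today_cents: int) -> Mode:
    tier = TIERS.get(tier_name)
    if tier is None:
        return Mode.DENY  # unknown tier: fail closed
    if not is_write:
        return Mode.ALLOW  # reads are allowed on every tier in this sketch
    if not tier.can_write:
        return Mode.DENY
    if spent_today_cents + cost_cents > tier.daily_budget_cents:
        return Mode.DENY
    return Mode.REQUIRE_APPROVAL if tier.needs_approval else Mode.ALLOW
```

With this layout, moving a customer to a different tier means changing one table entry, and the same policy code serves every tier.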

What smart teams should build before they chase full autonomy

If you are building agentic products in 2026, the practical priority is not another orchestration diagram. It is boring control infrastructure.

Here is the checklist that matters more than a flashy demo:

- Define an agent charter. Be explicit about what the agent is allowed to do, what it must never do, and what requires human approval.

- Convert actions into typed intents. Do not let raw natural-language impulses call live systems directly.

- Add budget ceilings. Cap spend per task, per user, and per time window.

- Limit concurrency and retries. Most expensive failures come from loops and fan-out that repeat one mistake many times, not from a single bad call.

- Use fail-closed policies for critical actions. If the policy engine cannot decide, the action should not happen.

- Log every authorization decision. Treat agent actions like security-relevant events, not just app telemetry.

- Separate observability from prevention. Monitoring matters, but alerts after the damage are not a substitute for blocking risky actions before execution.
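Several checklist items can live in one small gate that runs before any side effect. The sketch below is hypothetical: the `Governor` class, its parameters, and the in-memory counters are assumptions, not any specific product's API. It combines the following controls:

- per-task and per-user sliding-window budgets
- a concurrency cap
- a retry limit
- fail-closed handling of policy errors

The gate charges spend before the action runs. That choice is conservative: a failed call still counts against the budget.

```python
import threading
import time
from collections import defaultdict, deque


class Denied(Exception):
    """Raised when the gate refuses to run an action."""


class Governor:
    def __init__(self, per_task_cents, per_user_window_cents, window_s, max_concurrent, max_attempts):
        self.per_task_cents = per_task_cents
        self.per_user_window_cents = per_user_window_cents
        self.window_s = window_s
        self.max_attempts = max_attempts
        self._slots = threading.BoundedSemaphore(max_concurrent)
        self._lock = threading.Lock()
        self._task_spend = defaultdict(int)
        self._user_spend = defaultdict(deque)  # user_id -> deque of (timestamp, cents)
        self._attempts = defaultdict(int)

    def _charge(self, task_id, user_id, cents, now):
        with self._lock:
            if type(cents) is not int or cents < 0:
                raise ValueError(f"invalid cost: {cents!r}")
            self._attempts[task_id] += 1
            if self._attempts[task_id] > self.max_attempts:
                raise Denied("retry limit reached")
            if self._task_spend[task_id] + cents > self.per_task_cents:
                raise Denied("per-task budget exceeded")
            window = self._user_spend[user_id]
            while window and window[0][0] <= now - self.window_s:
                window.popleft()  # drop spend that has aged out of the window
            if sum(c for _, c in window) + cents > self.per_user_window_cents:
                raise Denied("per-user window budget exceeded")
            self._task_spend[task_id] += cents
            window.append((now, cents))

    def run(self, task_id, user_id, cents, action, now=None):
        """Charge the budgets, then call action() while holding a concurrency slot."""
        now = time.monotonic() if now is None else now
        if not self._slots.acquire(blocking=False):
            raise Denied("concurrency limit reached")
        try:
            try:
                self._charge(task_id, user_id, cents, now)
            except Denied:
                raise
            except Exception as exc:
                # Fail closed: if the policy itself errors, the action does not run.
                raise Denied(f"policy error: {exc}") from exc
            return action()
        finally:
            self._slots.release()
```

The `now` parameter lets tests exercise the window logic deterministically. When callers omit it, the gate uses `time.monotonic()`.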

These are not theoretical niceties. They are the difference between a pilot that impresses a product manager and a system that a legal, finance, or infrastructure team will actually approve for broad use.

The hidden business upside: trust compounds faster than raw capability

There is also a market lesson here. Many teams assume the moat in AI products will come from model quality alone. That is too narrow.

Capability gets copied quickly. Control surfaces do not.

The product that wins in the enterprise is often the one that gives buyers a sane answer to uncomfortable questions:

- Who approved this action?

- What was the budget limit?

- Why did the system call this tool five times?

- Can we replay the decision path?

- Can we restrict one department without slowing the whole platform?

Those answers are not cosmetic. They reduce procurement friction, shorten security review cycles, and make expansion easier after the first deployment. In other words, they influence revenue much earlier than many teams expect.

That is why “authorization for agents” should not be filed away as a back-office concern. It is shaping who can move from proof of concept to standard operating workflow.

What this means for the next year of AI product design

The near future will likely split agent companies into two camps.

The first camp will keep selling the fantasy of general autonomy. Their products will look powerful in demos and fragile under real permissions, real budgets, and real operational edge cases.

The second camp will treat agents more like junior operators inside a controlled business process. These systems may feel less dramatic, but they will be easier to adopt because they respect the simple truth that most organizations do not fear intelligence. They fear uncontrolled execution.

That is why the best agent products over the next year may seem paradoxically stricter. More approvals. More budgets. More explicit scopes. More boring policy checks sitting between the model and the world.

Good. That is how software grows up.

FAQ

Are guardrails enough without better models?

No. Better models still matter. But stronger models without execution controls mostly increase the scale of possible mistakes.

Does this slow products down?

In the short term, yes. Over the longer run, it often speeds adoption, because teams can ship bounded autonomy sooner instead of waiting for unrealistic levels of model reliability.

Is monitoring enough?

Usually not. Monitoring tells you what went wrong. Authorization layers decide what is allowed to happen in the first place.

Should every agent use this pattern?

Not every agent needs a heavy policy engine. But any agent that can trigger costs, modify records, move money, touch infrastructure, or create cascading side effects needs stricter control than prompt engineering alone can provide.

The editorial verdict

The interesting part of the Reddit thread is not the specific project. It is the broader pattern it reveals. The AI market is slowly admitting that reasoning is only half the job. The other half is building systems that can say no, slow down, ask for approval, and leave a trail.

That may sound less revolutionary than autonomous agents everywhere. It is also far more likely to survive contact with reality.

References

- Primary Reddit discussion: https://www.reddit.com/r/artificial/comments/1rvdy8f/were_building_a_deterministic_authorization_layer/

- Anthropic, Building effective agents: https://www.anthropic.com/research/building-effective-agents

- Microsoft Learn, Process to build agents across your organization: https://learn.microsoft.com/en-us/azure/cloud-adoption-framework/ai-agents/build-secure-process